Chapter 3 – What should a reliable Jenkins setup look like?

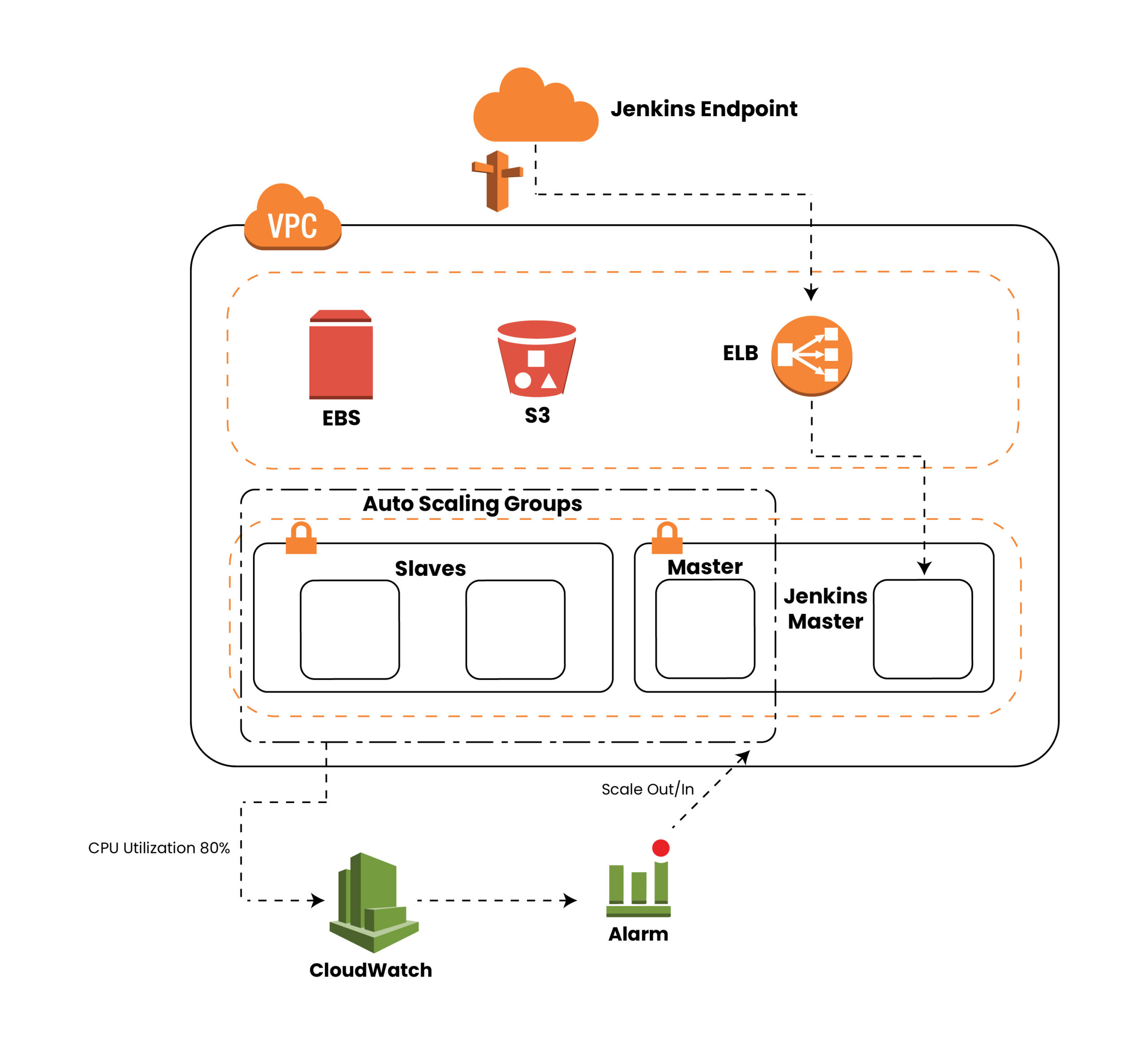

We’ve seen that the traditional Jenkins setup has many disadvantages and can badly impact the release management process. The topics below show what a scalable and self-reliant Jenkins setup looks like. We assume the setup is hosted on AWS as a reference, but the same holds true for any cloud service provider.

This topic is divided into two subtopics for better understanding.

3.1 Highly available and highly scalable Jenkins architecture

The picture above shows the architecture diagram of a cloud-hosted Jenkins. Here, Jenkins slaves are launched on demand as new build requests enter the queue, and the Jenkins master is self-healing. This makes Jenkins highly scalable and highly available.

As you can see, the architecture relies on two auto scaling groups, one for the Jenkins master and another for the Jenkins slaves. Both can be in the same or different availability zones (AZs), depending on your requirements. Using auto scaling groups here helps ensure that the master and slaves are available at any given point in time. Periodic EBS snapshots and the use of S3 for data storage make the whole setup flexible and easy to relaunch after a failure or during a scale-in/scale-out event.
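The two auto scaling groups described above could be expressed as a CloudFormation template. The following is a minimal sketch that builds such a template as a Python dictionary and prints it as JSON. The resource names, subnet IDs, launch template IDs, and group sizes are hypothetical placeholders chosen for illustration, not values from the architecture diagram.

```python
import json


def autoscaling_group(name, min_size, max_size, subnet_ids, launch_template_id):
    """Return a CloudFormation AutoScalingGroup resource as a plain dict."""
    return {
        name: {
            "Type": "AWS::AutoScaling::AutoScalingGroup",
            "Properties": {
                # CloudFormation expects MinSize and MaxSize as strings.
                "MinSize": str(min_size),
                "MaxSize": str(max_size),
                # Subnets may sit in one or several availability zones.
                "VPCZoneIdentifier": subnet_ids,
                "LaunchTemplate": {
                    "LaunchTemplateId": launch_template_id,
                    "Version": "1",
                },
            },
        }
    }


# All IDs below are hypothetical placeholders.
SUBNETS = ["subnet-aaaa1111", "subnet-bbbb2222"]

template = {"AWSTemplateFormatVersion": "2010-09-09", "Resources": {}}

# Master group: exactly one instance, replaced automatically if it fails.
template["Resources"].update(
    autoscaling_group("JenkinsMasterGroup", 1, 1, SUBNETS, "lt-master-placeholder")
)
# Slave group: assumed to scale between 0 and 10 instances.
template["Resources"].update(
    autoscaling_group("JenkinsSlaveGroup", 0, 10, SUBNETS, "lt-slave-placeholder")
)

print(json.dumps(template, indent=2))
```

Keeping the master group at a minimum and maximum of one instance is one way to get the self-healing behaviour: the group replaces a failed master rather than scaling it.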

The whole setup should be extended with a proper monitoring and logging solution, which will make the Jenkins infrastructure more reliable and secure. In the event of a natural disaster, the same setup can be brought back up in minutes from snapshots. Using CloudFormation is always advised here.

In the next chapter, we will analyse the cloud architecture in detail and list the benefits of a cloud setup over an on-prem solution.

Highly Available

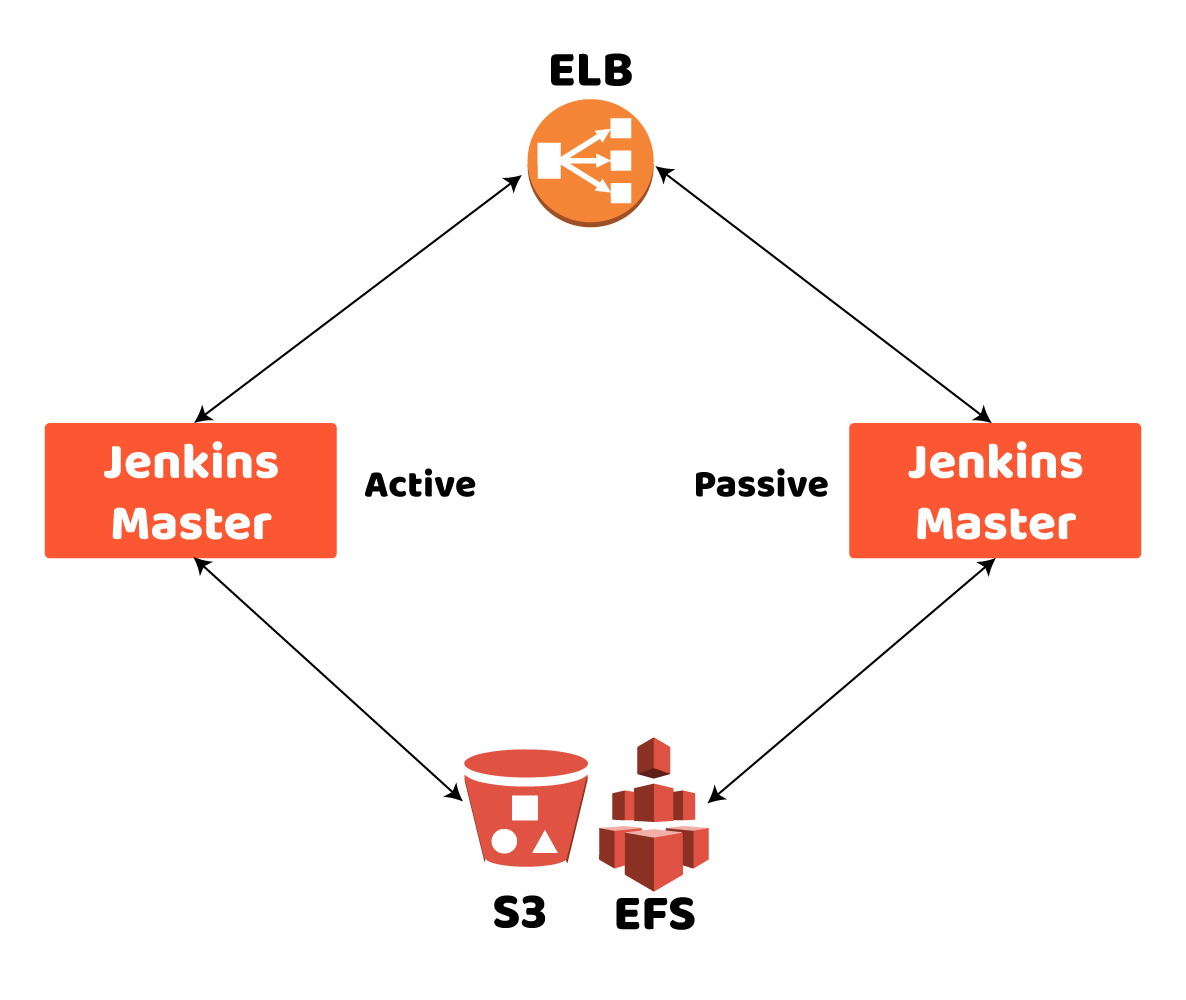

Our cloud-hosted setup will look like this.

In a cloud-hosted setup, it’s easy to run the Jenkins master in an active/passive manner or in an auto-scaled configuration. Whenever there is a problem with the active master and it becomes unavailable, another master is provisioned automatically and/or becomes active, and jobs resume. These jobs are then served by the master that has become active. The ELB sends heartbeats (health checks) to the instances to see whether the master is alive. The same can be achieved on-prem, but that requires extra hardware on standby, and the biggest drawback is that this hardware sits unutilized while everything is working fine. In a cloud solution we don’t need to worry about this and can properly utilize the provisioned resources.
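The heartbeat-based failover decision can be illustrated with a small simulation. This is only a sketch of the general idea, not how the ELB is implemented: the `HealthTracker` class, the `first_failover_index` helper, and the threshold of three consecutive missed heartbeats are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class HealthTracker:
    """Tracks consecutive missed heartbeats for one master instance."""

    unhealthy_threshold: int = 3  # assumed value, tune to your setup
    consecutive_failures: int = 0

    def record(self, heartbeat_ok: bool) -> bool:
        """Record one heartbeat; return True once failover should start."""
        if heartbeat_ok:
            # Any successful heartbeat resets the failure streak.
            self.consecutive_failures = 0
        else:
            self.consecutive_failures += 1
        return self.consecutive_failures >= self.unhealthy_threshold


def first_failover_index(heartbeats, unhealthy_threshold=3):
    """Return the index of the heartbeat that triggers failover, or None."""
    tracker = HealthTracker(unhealthy_threshold=unhealthy_threshold)
    for index, heartbeat_ok in enumerate(heartbeats):
        if tracker.record(heartbeat_ok):
            return index
    return None


if __name__ == "__main__":
    # Two misses, a recovery, then three misses in a row.
    history = [True, False, False, True, False, False, False]
    print(first_failover_index(history))
```

Requiring several consecutive misses avoids replacing a master because of a single dropped health check.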

You can also keep all the data in a central location, so that when your setup runs in auto-scaled mode, all the data and configuration are available to new Jenkins machines.

Highly Scalable and Auto Scaling

When an organization scales up in terms of services, it becomes the need of the hour to roll out changes to production in almost no time to keep time to market (TTM) short. Using Jenkins is itself one of the factors in achieving a short TTM. Furthermore, the master itself can scale either “vertically” or “horizontally”.

Vertical growth is when a master’s load increases because more jobs are configured or builds are executed more frequently. A cloud-hosted setup offers an easy solution in this case, since you can launch an instance with higher capacity in minutes.

Horizontal growth is the creation of additional master machines in the setup to accommodate new jobs or projects, rather than adding them to a single existing master. Many organizations prefer multiple setups, allocated per set of applications or per domain, for ease of maintenance. Maintaining these is easier in the cloud than on-prem, where provisioning time is much higher.

When you need to scale your Jenkins setup up or down, be it master or slave, that can be achieved easily with services available from cloud providers. For example, in AWS you can make use of CloudFormation and auto scaling groups. CloudWatch can monitor usage at regular intervals, and a new server is provisioned accordingly.

An important note here is that this is not the default Jenkins setup, and DevOps engineers should be familiar with the other cloud services involved.

No manual management of slaves

When auto scaling is enabled in the cloud, you don’t need to worry about switching slaves on and off. As load increases, new slaves are provisioned from a pre-configured snapshot and start executing jobs managed by the master. When the peak is over, the same slaves are destroyed. This ensures a high number of slaves during peak time and only a reasonable number during off-peak hours. The same is hard to achieve on-prem, where provisioning new slaves may need to go through the procurement cycle.
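The scale-up and scale-down behaviour described here comes down to a sizing rule. The sketch below shows one possible rule, assuming each slave runs a fixed number of executors and the group has a configured minimum and maximum. The function name `desired_slaves` and all default values are hypothetical.

```python
import math


def desired_slaves(queued_builds, running_builds,
                   executors_per_slave=2, min_slaves=1, max_slaves=10):
    """Return how many slaves are needed for the current build load.

    The result is clamped to [min_slaves, max_slaves] so the group never
    scales to zero by accident or grows without bound.
    """
    total_builds = queued_builds + running_builds
    needed = math.ceil(total_builds / executors_per_slave)
    return max(min_slaves, min(max_slaves, needed))


if __name__ == "__main__":
    print(desired_slaves(queued_builds=7, running_builds=4))    # peak load
    print(desired_slaves(queued_builds=0, running_builds=0))    # off-peak
    print(desired_slaves(queued_builds=100, running_builds=0))  # capped
```

In practice the resulting number would be fed to the auto scaling group as its desired capacity. That wiring is left out here because it depends on the chosen tooling.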

OS-level updates of the slave machines are also easy in the cloud: do the update once and back it up, and new slaves will use the same configuration when provisioned.

Ease of Disaster Recovery

With a cloud setup, it’s easy to spread things across multiple availability zones or regions, and to switch regions in case of a natural calamity in a particular area. The same is much harder with an on-prem solution: in the event of a natural disaster, the on-prem setup would go down and the business would not be able to roll out any changes. If the primary availability zone fails, you can move backup volumes and snapshots to a new availability zone without configuring everything from scratch, and thus minimize the impact.

Snapshot backups of the Jenkins setup (that is, the Jenkins home directory) can provide a level of fault tolerance and offer an effective solution for system-wide backups. Numerous plugins are also available for making system backups.

Varieties of computing resources

In the cloud, instances are available with different computing capacities, whereas on-prem you have to live with already procured resources. Upgrading is not an easy process and is time consuming, as it may have to go through the procurement cycle. And if the full capacity is not utilized, you can’t downgrade the machine either. In a cloud setup, upgrading or downgrading the same resources does not take much time and can be done at any time based on your need.

Cost Savings (pay as you go)

Most cloud providers offer a pay-as-you-go plan, so upgrading, downgrading, or auto scaling does not incur a high fixed cost. As load increases or decreases, provision exactly what you need and pay only for the time you use it, not for the full time.
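A short worked example makes the pay-as-you-go argument concrete. All numbers below are hypothetical: an hourly rate of 10 cents per slave, 10 slaves needed for 8 peak hours a day, and 2 slaves needed for the remaining 16 hours. Real prices vary by provider, instance type, and region.

```python
HOURLY_RATE_CENTS = 10  # hypothetical price per slave per hour
DAYS_PER_MONTH = 30


def monthly_cost_cents(instance_hours_per_day):
    """Return the monthly cost in cents for a daily number of instance-hours."""
    return instance_hours_per_day * DAYS_PER_MONTH * HOURLY_RATE_CENTS


# Fixed capacity: 10 slaves running around the clock.
fixed_hours_per_day = 10 * 24

# Pay as you go: 10 slaves for 8 peak hours, 2 slaves for 16 off-peak hours.
elastic_hours_per_day = 10 * 8 + 2 * 16

fixed_cost = monthly_cost_cents(fixed_hours_per_day)
elastic_cost = monthly_cost_cents(elastic_hours_per_day)
savings = fixed_cost - elastic_cost

if __name__ == "__main__":
    print(f"fixed:   ${fixed_cost / 100:.2f}")
    print(f"elastic: ${elastic_cost / 100:.2f}")
    print(f"savings: ${savings / 100:.2f}")
```

Amounts are kept in whole cents to avoid floating-point rounding in the arithmetic. Under these assumed numbers the elastic setup costs less than half of the fixed one.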

Backup Persistence

All cloud resources (data, setup snapshots) can be backed up manually or automatically at regular intervals, and the backups can be used in case of a disaster or to create a new setup for other applications or new domains. This greatly reduces the risk of losing data in the cloud. The same can be achieved on-prem with a different set of tools, but configuring and managing the backup process there would be cumbersome.

Volumes can also be restored automatically as your auto scaling needs require.
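Regular automated backups usually go together with a retention rule, so that old snapshots do not pile up. The sketch below shows one possible rule: delete snapshots older than a given number of days, but always keep the newest few. The function name `snapshots_to_delete` and the default values are assumptions for illustration.

```python
from datetime import datetime, timedelta


def snapshots_to_delete(snapshot_times, now, keep_days=7, keep_minimum=3):
    """Return timestamps of snapshots that can be deleted, newest first.

    A snapshot is deleted only if it is older than keep_days AND it is not
    among the keep_minimum most recent snapshots.
    """
    newest_first = sorted(snapshot_times, reverse=True)
    protected = set(newest_first[:keep_minimum])
    cutoff = now - timedelta(days=keep_days)
    return [t for t in newest_first if t < cutoff and t not in protected]


if __name__ == "__main__":
    now = datetime(2024, 1, 31)
    # Snapshots taken 1, 5, 10, and 20 days ago.
    snapshots = [now - timedelta(days=d) for d in (1, 5, 10, 20)]
    for stale in snapshots_to_delete(snapshots, now):
        print("delete snapshot from", stale.date())
```

Protecting the newest snapshots regardless of age ensures that a restore point is still available even if backups stop running for a while.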

Encryption and Security

Security is one of the most important aspects for any organization. Putting data in the cloud needs some thought, but nowadays cloud providers offer enhanced security services and mechanisms.

For example, in AWS you can use private and public subnets within a VPC and create encrypted volumes, snapshots, and backups. Network ACLs and security groups control access to instances, and identity and permissions are managed using IAM roles and IAM policies.
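As an illustration of managing permissions with IAM policies, the sketch below builds a policy document that a Jenkins master role might use to manage slave instances and store backups in S3. The bucket name `example-jenkins-backups` and the exact set of actions are assumptions for illustration. A real policy should be narrowed to the resources your setup actually uses.

```python
import json

# Hypothetical bucket name, used only for illustration.
BACKUP_BUCKET_ARN = "arn:aws:s3:::example-jenkins-backups"

jenkins_master_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {
            # Lets the master launch, tag, inspect, and terminate slaves.
            "Sid": "ManageSlaveInstances",
            "Effect": "Allow",
            "Action": [
                "ec2:DescribeInstances",
                "ec2:RunInstances",
                "ec2:CreateTags",
                "ec2:TerminateInstances",
            ],
            # Assumption: left broad here; restrict it in a real setup.
            "Resource": "*",
        },
        {
            # Lets the master read and write backup objects in one bucket.
            "Sid": "ReadWriteBackups",
            "Effect": "Allow",
            "Action": ["s3:GetObject", "s3:PutObject"],
            "Resource": BACKUP_BUCKET_ARN + "/*",
        },
    ],
}

policy_json = json.dumps(jenkins_master_policy, indent=2)

if __name__ == "__main__":
    print(policy_json)
```

Attaching such a policy to an IAM role used by the master instance, rather than storing access keys on the machine, keeps credentials out of the Jenkins home directory and its backups.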

You can also enable multi-factor authentication or integrate access with your corporate directory, and use monitoring services like CloudTrail to review access logs and take appropriate action.

Chapter 4 – Conclusion

Each solution has its advantages and disadvantages, and on-prem in particular has certain limitations. Which one you choose depends on the needs of your organization, along with cost.

However, the above analysis should help you make an informed decision. Please do your due diligence and consider all the factors mentioned above when making a choice.